ARIS

(Workpackage 1: LORIA results)

The goal of the ARIS project was to provide new and innovative AR technologies for e-(motion)-commerce applications, where products can be presented in the context of their future environment. Two system approaches have been developed:

1. An interactive desktop system, where the end-user can easily integrate 3D product models (e.g. furniture) into a set of images of his real environment, taking consistent illumination of real and virtual objects into account.

2. A mobile AR unit, where 3D product models can be directly visualized on a real site and discussed with remote participants, relying on shared augmented reality technologies.

Both systems have been implemented on the same technology infrastructure and tested with end-users on well-defined application scenarios.

Our contribution concerns real-time camera tracking. Several methods have been developed to obtain fast, accurate and robust tracking over long sequences:

markerless tracking using planar structures

During the first year of the project, we proposed a real-time camera tracking system for scenes that contain planar structures. The range of scenes our method can handle is large: planar structures are common in indoor environments, where the ceiling or the ground plane is often visible. This is also often true for urban outdoor scenes, because building façades, roads and squares are often visible and can be used for registration.

The main idea of the algorithm is to compute the homography induced by each visible plane. As each homography is a function of the camera parameters, the camera pose can be inferred from the set of homographies induced by the visible planes. This first prototype runs at approximately 16 frames per second.
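To illustrate how a pose follows from a plane-induced homography, the sketch below handles the simplest case: a single known plane, taken as Z = 0 in world coordinates, so that H ~ K [r1 r2 t]. This is a standard textbook decomposition, not the multi-plane estimator described here; the function name, the intrinsic matrix K and the test pose are made-up values for illustration.

```python
import numpy as np

def pose_from_plane_homography(H, K):
    """Recover (R, t) from a homography H mapping points (X, Y, 1) of the
    world plane Z = 0 to image pixels, given the intrinsic matrix K.

    Relies on H ~ K [r1 r2 t] (textbook single-plane decomposition).
    """
    A = np.linalg.solve(K, H)                 # A = K^-1 H ~ [r1 r2 t] up to scale
    # r1 and r2 must have unit norm: average their norms to fix the scale.
    lam = 2.0 / (np.linalg.norm(A[:, 0]) + np.linalg.norm(A[:, 1]))
    if lam * A[2, 2] < 0:                     # pick the sign that puts the plane in front (t_z > 0)
        lam = -lam
    r1, r2, t = lam * A[:, 0], lam * A[:, 1], lam * A[:, 2]
    R = np.column_stack([r1, r2, np.cross(r1, r2)])
    # Noise makes R slightly non-orthogonal: project it onto the nearest rotation.
    U, _, Vt = np.linalg.svd(R)
    R = U @ np.diag([1.0, 1.0, np.linalg.det(U @ Vt)]) @ Vt
    return R, t

# Synthetic check: build H from a known pose, then recover that pose.
K = np.array([[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]])
c, s = np.cos(0.3), np.sin(0.3)
R_true = np.array([[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]])      # rotation about the y-axis
t_true = np.array([0.1, -0.2, 2.0])
H = -3.7 * K @ np.column_stack([R_true[:, 0], R_true[:, 1], t_true])  # arbitrary projective scale
R_est, t_est = pose_from_plane_homography(H, K)
```

With several visible planes, each plane contributes such a constraint on the same pose, which is what makes the multi-plane estimate more precise than a single-plane one.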

videos

- Indoor multiplanar tracking + augmentation
- Outdoor multiplanar tracking + augmentation

papers

- Gilles Simon and Marie-Odile Berger. Pose Estimation for Planar Structures. IEEE Computer Graphics and Applications, 22(6):46-53, November 2002.
- Gilles Simon and Marie-Odile Berger. Real time registration of known or recovered multi-planar structures: application to AR. In 13th British Machine Vision Conference 2002 - BMVC'2002, Cardiff, United Kingdom, pages 567-576, September 2002.
- Gilles Simon and Marie-Odile Berger. Reconstructing while registering: a novel approach for markerless augmented reality. In International Symposium on Mixed and Augmented Reality - ISMAR'02, Darmstadt, Germany, September 2002.

model selection

Even when the precision of the viewpoints is improved by considering several planes, fluctuations in the parameters are often observed and may lead to unpleasant visual effects, such as jittering or sliding, in the augmented scenes. These fluctuations are especially conspicuous when the camera motion is small, because of noise and imprecision in the computed point coordinates. In the past, several papers used Kalman filtering for prediction and stabilization. However, a Kalman filter is not always advantageous for AR, because a low-order dynamical model of human motion may not be appropriate except in very constrained scenarios.
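For illustration only (the text does not say which dynamical models earlier work used), a typical low-order model is the constant-velocity model, in which each pose parameter $p$ evolves with a velocity $v$, a frame interval $\Delta t$ and process noise $w_k$:

```latex
\begin{pmatrix} p_{k+1} \\ v_{k+1} \end{pmatrix}
=
\begin{pmatrix} 1 & \Delta t \\ 0 & 1 \end{pmatrix}
\begin{pmatrix} p_k \\ v_k \end{pmatrix}
+ w_k,
\qquad w_k \sim \mathcal{N}(0, Q).
```

A hand-held camera that alternately stops, pans and moves freely is poorly described by any single fixed model of this kind.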

Following Matsunaga and Kanatani [kanatani00] and Torr et al. [torr98], we investigate the use of motion model selection to reduce fluctuations of the camera parameters and to improve the visual impression of the augmented scene. The underlying idea of model selection is as follows: a higher-order motion model fits any data set more accurately than a lower-order model. However, high-order models also fit part of the random noise they are supposed to remove. Thus, a high-order model, although accurate, is less stable with respect to random perturbations in the data. A good motion model must strike the right balance between accuracy and stability.
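A toy example makes this trade-off concrete. Below, polynomials of increasing order are fitted to a noisy, linearly drifting parameter: the residual sum of squares (RSS) can only decrease as the order grows (the "higher order fits better" part of the argument), while Akaike's criterion (AIC) adds a penalty of 2 per fitted coefficient. This is a generic illustration with made-up data and a hypothetical function name, not the camera motion models or criteria used in ARIS.

```python
import numpy as np

def aic_select(t, y, max_order=5):
    """Choose a polynomial order for the samples y(t) with Akaike's criterion.

    For Gaussian noise of unknown variance, AIC = n * log(RSS / n) + 2 * k,
    with k the number of fitted coefficients: the first term rewards
    accuracy, the second penalizes complexity.
    """
    n = len(t)
    rss, aic = [], []
    for order in range(max_order + 1):
        coeffs = np.polyfit(t, y, order)
        r = float(np.sum((np.polyval(coeffs, t) - y) ** 2))
        rss.append(r)
        aic.append(n * np.log(r / n) + 2 * (order + 1))
    return int(np.argmin(aic)), rss, aic

# Toy "camera parameter" drifting linearly over 60 frames, plus measurement noise.
rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 60)
y = 0.5 + 2.0 * t + rng.normal(scale=0.05, size=t.size)
best, rss, aic = aic_select(t, y)
```

On such data AIC will usually prefer the linear model, although it is known to pick an over-parameterized model now and then.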

The model selection principle demands that the model explain the data very well and at the same time have a simple structure. Within the ARIS project, we decided to combine the model selection strategy with multi-planar calibration in order to improve the stability and the accuracy of the estimated parameters. Many model selection criteria have been proposed, but none is known to be successful in general.

For this reason, we compared different model selection criteria, with special attention to those that involve the covariance matrix of the estimated parameters or the Fisher information matrix. Indeed, criteria such as Akaike's are often only asymptotic approximations of a criterion that includes the covariance or the information matrix, so we expected such criteria to improve model selection.
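One well-known example of this relationship, given here for illustration (the text does not list which criteria were compared), is Takeuchi's information criterion (TIC). It contains the information matrices explicitly, where $\ell = \sum_i \ell_i$ is the log-likelihood of $n$ observations and $\hat\theta$ the estimate with $k$ parameters:

```latex
\mathrm{TIC} = -2\,\ell(\hat\theta) + 2\,\operatorname{tr}\!\left(\hat I\,\hat J^{-1}\right),
\qquad
\hat I = \frac{1}{n}\sum_{i=1}^{n} \nabla\ell_i(\hat\theta)\,\nabla\ell_i(\hat\theta)^{\top},
\qquad
\hat J = -\frac{1}{n}\sum_{i=1}^{n} \nabla^{2}\ell_i(\hat\theta).
```

When the model is correctly specified, $\hat I$ and $\hat J$ estimate the same matrix, so $\operatorname{tr}(\hat I \hat J^{-1}) \to k$ and TIC reduces to $\mathrm{AIC} = -2\,\ell(\hat\theta) + 2k$.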

As expected, experiments conducted on synthetic images showed that the criteria involving the covariance matrix or the Fisher information matrix gave the best results. Experiments on real image sequences taken with a turntable also showed that model selection improves the accuracy of the recovered camera pose. In addition, model selection produces a smoother trajectory and a better visual impression. For the closed sequence, Figure 1 shows the distance from the current camera pose to the initial camera pose. The three curves respectively plot the actual camera pose, the pose recovered without model selection, and the pose recovered with model selection. The graph shows that model selection improves the accuracy of the viewpoint and noticeably reduces the drift that is common in long sequences.

Figure 1: comparison of tracking performance with and without model selection.

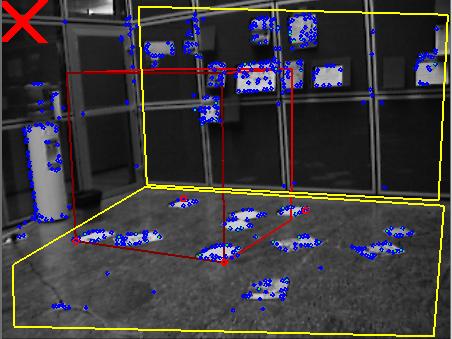

Results on a miniature scene and on a real-size indoor sequence acquired with a hand-held camera are shown below. In the second example, because of the brightness of the floor, some sheets of paper were laid on it to ease the tracking process. During the sequence, two panoramic motions were performed, one with a tripod and the other without. Both are correctly labelled by the model selection process (the red cross indicates the stationary model, the green circle the panoramic rotation, and the blue square the general model).
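The three labels correspond to three candidate image motion models between two frames: no motion, a pure rotation about the optical centre (x' ~ K R K^-1 x), and a general plane-induced homography. The sketch below fits each model to matched points and keeps the one with the lowest penalized residual. It is only an illustration under our own assumptions: a single plane, a known noise level sigma, and a simple BIC-style penalty k * sigma^2 * log(n) instead of the covariance-based criteria discussed above. All function names and the synthetic data are hypothetical.

```python
import numpy as np

def _hom(p):
    """(N, 2) pixels -> (N, 3) homogeneous coordinates."""
    return np.hstack([p, np.ones((len(p), 1))])

def _apply(M, p):
    """Map (N, 2) pixels through the 3x3 matrix M and dehomogenize."""
    q = _hom(p) @ M.T
    return q[:, :2] / q[:, 2:3]

def _fit_rotation(x, xp, K):
    """Pure rotation x' ~ K R K^-1 x: Kabsch alignment of unit bearing vectors."""
    Kinv = np.linalg.inv(K)
    b = _hom(x) @ Kinv.T
    bp = _hom(xp) @ Kinv.T
    b /= np.linalg.norm(b, axis=1, keepdims=True)
    bp /= np.linalg.norm(bp, axis=1, keepdims=True)
    U, _, Vt = np.linalg.svd(bp.T @ b)        # sum over i of b'_i b_i^T
    R = U @ np.diag([1.0, 1.0, np.linalg.det(U @ Vt)]) @ Vt
    return K @ R @ Kinv

def _normalizer(p):
    """Hartley normalization: centroid at the origin, mean distance sqrt(2)."""
    c = p.mean(axis=0)
    s = np.sqrt(2.0) / np.mean(np.linalg.norm(p - c, axis=1))
    return np.array([[s, 0.0, -s * c[0]], [0.0, s, -s * c[1]], [0.0, 0.0, 1.0]])

def _fit_homography(x, xp):
    """General planar motion x' ~ H x: normalized direct linear transform (DLT)."""
    T, Tp = _normalizer(x), _normalizer(xp)
    rows = []
    for (u, v), (up, vp) in zip(_apply(T, x), _apply(Tp, xp)):
        rows.append([-u, -v, -1.0, 0.0, 0.0, 0.0, up * u, up * v, up])
        rows.append([0.0, 0.0, 0.0, -u, -v, -1.0, vp * u, vp * v, vp])
    _, _, Vt = np.linalg.svd(np.asarray(rows))
    return np.linalg.inv(Tp) @ Vt[-1].reshape(3, 3) @ T

def select_motion_model(x, xp, K, sigma):
    """Pick the model minimizing RSS + k * sigma^2 * log(number of coordinates)."""
    models = {
        "stationary": (np.eye(3), 0),
        "rotation": (_fit_rotation(x, xp, K), 3),
        "general": (_fit_homography(x, xp), 8),
    }
    n_obs = 2 * len(x)                         # two coordinates per matched point
    scores = {}
    for name, (M, k) in models.items():
        rss = float(np.sum((_apply(M, x) - xp) ** 2))
        scores[name] = rss + k * sigma ** 2 * np.log(n_obs)
    return min(scores, key=scores.get), scores

# Synthetic matches: exact points in frame 1, noisy points in frame 2.
rng = np.random.default_rng(1)
K = np.array([[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]])
x = rng.uniform([0.0, 0.0], [640.0, 480.0], size=(100, 2))
sigma = 0.5
a = np.deg2rad(5.0)
R_pan = np.array([[np.cos(a), 0.0, np.sin(a)], [0.0, 1.0, 0.0], [-np.sin(a), 0.0, np.cos(a)]])
# Plane Z = 2 (camera-1 frame) under relative translation t: H = K (I + t n^T / d) K^-1.
H_plane = K @ (np.eye(3) + np.outer([0.3, 0.1, 0.0], [0.0, 0.0, 1.0]) / 2.0) @ np.linalg.inv(K)
labels = []
for M in (np.eye(3), K @ R_pan @ np.linalg.inv(K), H_plane):
    xp = _apply(M, x) + rng.normal(scale=sigma, size=x.shape)
    labels.append(select_motion_model(x, xp, K, sigma)[0])
```

The nesting matters: a rotation is a special homography and "no motion" a special rotation, so the general model always has the smallest residual, and only the penalty lets the simpler labels win.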

To conclude, our experiments with various model selection criteria showed that criteria involving information on the covariance of the estimated parameters are well suited to camera stabilization. They produce smoother trajectories and a better visual impression. In addition, model selection noticeably reduces the drift that is common in long sequences.

[kanatani00] C. Matsunaga and K. Kanatani. Calibration of a Moving Camera Using a Planar Pattern: Optimal Computation, Reliability Evaluation and Stabilization by Model Selection. In Proceedings of the 6th European Conference on Computer Vision, Trinity College Dublin (Ireland), pages 595-609, 2000.

[torr98] P.H.S. Torr, A.W. Fitzgibbon and A. Zisserman. Maintaining Multiple Motion Model Hypotheses over Many Views to Recover Matching and Structure. In Proceedings of the 6th International Conference on Computer Vision, Bombay (India), pages 485-491, 1998.

videos

- Without model selection
- With model selection
- A real-size indoor sequence

papers

- Javier-Flavio Vigueras-Gomez, Marie-Odile Berger, and Gilles Simon. Iterative Multi-Planar Camera Calibration: Improving stability using Model Selection. In Vision, Video and Graphics (VVG)'03, Eurographics Association, Bath, UK, July 2003.

hybrid tracking

As purely vision-based tracking methods usually cannot keep up with fast or abrupt user movements, a hybrid approach that combines the accuracy of vision-based methods with the robustness of inertial tracking systems was studied during the last year.

Several systems combining vision and sensors have been proposed in the past. In [you01], an extended Kalman filter combines landmark tracking and inertial navigation. However, extended Kalman filters require good measurement models, which are difficult to obtain in AR, where the user is generally free to move. More often, sensors are used as prediction devices to help image feature detection: in [state96], a magnetic sensor is used to help landmark search, whereas in [klein02] an inertial sensor is used to help detect edges corresponding to a wire-frame model of the scene.

Our approach is close to these works, as we also use an inertial sensor to improve the matching stage. However, we brought significant improvements to these works:

- the inertial device is not used systematically, but only when needed (generally after a large rotation has occurred);
- expected sensor errors are taken into account at each step of the algorithm. Propagating these errors enables us to obtain refined search regions for the image features, which are much more relevant than arbitrary rectangles;
- sensor data are used not only to predict the position of the features, but also to refine the matching process and obtain a higher number of correct matches;
- the synchronization problem, rarely mentioned in previous works, is addressed: we show that the synchronization delay between image and sensor acquisitions varies over time, which requires real-time re-synchronizations during the tracking process. We propose a reliable method for this purpose.
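The error propagation in the second item can be illustrated with a first-order (linearized) sketch. It is our own simplified version, not the algorithm of the cited work: it assumes a pure camera rotation between frames (so a pixel x moves to x' ~ K R K^-1 x), a sensor-predicted rotation R_sensor with a small rotation-vector error of known covariance, and a Mahalanobis ellipse as the search region. The function names, the intrinsics and the 99% gate are hypothetical choices.

```python
import numpy as np

def skew(w):
    """Cross-product matrix [w]x."""
    return np.array([[0.0, -w[2], w[1]], [w[2], 0.0, -w[0]], [-w[1], w[0], 0.0]])

def rodrigues(w):
    """Rotation matrix of the rotation vector w (unit axis times angle)."""
    theta = np.linalg.norm(w)
    if theta < 1e-12:
        return np.eye(3) + skew(w)
    k = skew(w / theta)
    return np.eye(3) + np.sin(theta) * k + (1.0 - np.cos(theta)) * (k @ k)

def predict_with_covariance(x, K, R_sensor, cov_w, eps=1e-6):
    """Predict pixel x after the sensor rotation R_sensor (pure rotation assumed)
    and propagate the 3x3 covariance cov_w of a small rotation error, applied as
    rodrigues(w) @ R_sensor, to a 2x2 pixel covariance (first order)."""
    ray = np.linalg.solve(K, np.array([x[0], x[1], 1.0]))

    def project(w):
        q = K @ rodrigues(w) @ R_sensor @ ray
        return q[:2] / q[2]

    p0 = project(np.zeros(3))
    # Central-difference Jacobian of the predicted pixel w.r.t. the rotation error.
    J = np.column_stack([(project(eps * e) - project(-eps * e)) / (2.0 * eps) for e in np.eye(3)])
    return p0, J @ cov_w @ J.T

def in_search_region(candidate, p0, cov_p, gate=9.21):
    """Mahalanobis gate; 9.21 is the 99% chi-square quantile with 2 degrees of freedom."""
    d = np.asarray(candidate, dtype=float) - p0
    return float(d @ np.linalg.solve(cov_p, d)) <= gate

K = np.array([[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]])
R_sensor = rodrigues(np.array([0.0, np.deg2rad(10.0), 0.0]))  # hypothetical sensor reading
cov_w = np.diag([1e-4, 1e-4, 1e-4])                          # hypothetical 0.01 rad std per axis
p0, cov_p = predict_with_covariance((500.0, 300.0), K, R_sensor, cov_w)
```

Unlike a fixed rectangle, the resulting ellipse changes shape with the feature's image position and with the sensor uncertainty, so candidates outside it can be rejected before matching.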

Our marker-less tracking system is used as the basis of the hybrid algorithm. The robustness of the inertial sensor allows us to maintain tracking over long sequences. However, the process is incremental and may progressively diverge because of accumulated approximations, or even stop when matching fails.

For that reason, we finally proposed to add markers with known positions to the scene. These markers are used to initialize or re-initialize the tracking process in case of matching failure or divergence. Once the initial viewpoint is known, the hybrid system is used for tracking and the markers are no longer used (they can leave the user's field of view). As a result, we obtain a system that is robust against fast camera motions but also very accurate (no jittering effect), as all the texture information on the planar surfaces is used for tracking. Moreover, the user is free to move. The system has been tested on real and miniature scenes and runs at 10 fps on a 1.7 GHz laptop.
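The hand-over between markers and hybrid tracking amounts to a small two-state loop. The sketch below is a hypothetical illustration of that control flow only: pose_from_markers, hybrid_track and diverged are placeholder callbacks standing in for the real marker detector, hybrid tracker and divergence test, which are not specified here.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Any, Callable, Optional

class Mode(Enum):
    INIT_FROM_MARKERS = auto()   # wait until known markers give a pose
    HYBRID_TRACKING = auto()     # markerless + inertial tracking, markers unused

@dataclass
class TrackerState:
    mode: Mode = Mode.INIT_FROM_MARKERS
    pose: Optional[Any] = None

def step(state: TrackerState, frame: Any,
         pose_from_markers: Callable[[Any], Optional[Any]],
         hybrid_track: Callable[[Any, Any], Optional[Any]],
         diverged: Callable[[Any], bool]) -> TrackerState:
    """Advance the tracker by one frame (all callbacks are placeholders)."""
    if state.mode is Mode.INIT_FROM_MARKERS:
        pose = pose_from_markers(frame)           # None when no marker is visible
        if pose is None:
            return TrackerState()
        return TrackerState(Mode.HYBRID_TRACKING, pose)
    pose = hybrid_track(state.pose, frame)        # None when matching fails
    if pose is None or diverged(pose):
        # Fall back to the markers and try to re-initialize on this same frame.
        return step(TrackerState(), frame, pose_from_markers, hybrid_track, diverged)
    return TrackerState(Mode.HYBRID_TRACKING, pose)

# Stub frames: whether markers are visible and whether matching succeeds.
frames = [
    {"markers": False, "match": True},   # no markers yet: cannot start
    {"markers": True, "match": True},    # initialize from markers
    {"markers": False, "match": True},   # markers gone: hybrid tracking continues
    {"markers": False, "match": False},  # matching fails, no markers: back to init
    {"markers": True, "match": True},    # re-initialized from markers
]
state, modes = TrackerState(), []
for f in frames:
    state = step(state, f,
                 pose_from_markers=lambda fr: 0 if fr["markers"] else None,
                 hybrid_track=lambda prev, fr: prev + 1 if fr["match"] else None,
                 diverged=lambda pose: False)
    modes.append(state.mode)
```

Markers only matter in the initialization state, which is why they may leave the field of view once hybrid tracking has started.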

Our implementation is based on an inertial sensor provided by XSens (model MT9). We accurately measured the sensor errors using a motorized pan/tilt unit, and these errors are handled in the tracking process. In addition, we provided a tool to perform the sensor/camera calibration: to estimate camera rotations from sensor rotations, it is necessary to know the rigid transformation between the two devices. Our tool is based on automatic detection of a calibration target for several positions of the sensor/camera device, followed by a classical hand-eye calibration [tsai89].
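For the rotation part of the hand-eye problem, relative camera rotations A_i and relative sensor rotations B_i between calibration positions satisfy A_i R_X = R_X B_i, so their rotation vectors satisfy alpha_i = R_X beta_i. The sketch below solves for R_X by aligning these vectors with an SVD, a least-squares approach similar in spirit to Park and Martin's method; it is not the Tsai-Lenz algorithm cited above, ignores the translation part, and assumes rotation angles well below 180 degrees. Function names and the synthetic data are hypothetical.

```python
import numpy as np

def skew(w):
    """Cross-product matrix [w]x."""
    return np.array([[0.0, -w[2], w[1]], [w[2], 0.0, -w[0]], [-w[1], w[0], 0.0]])

def rodrigues(w):
    """Rotation matrix of the rotation vector w (unit axis times angle)."""
    theta = np.linalg.norm(w)
    if theta < 1e-12:
        return np.eye(3) + skew(w)
    k = skew(w / theta)
    return np.eye(3) + np.sin(theta) * k + (1.0 - np.cos(theta)) * (k @ k)

def log_rotation(R):
    """Rotation vector of R; assumes the rotation angle lies in [0, pi)."""
    theta = np.arccos(np.clip((np.trace(R) - 1.0) / 2.0, -1.0, 1.0))
    if theta < 1e-12:
        return np.zeros(3)
    v = np.array([R[2, 1] - R[1, 2], R[0, 2] - R[2, 0], R[1, 0] - R[0, 1]])
    return theta * v / (2.0 * np.sin(theta))

def hand_eye_rotation(A_list, B_list):
    """Least-squares R_X with A_i R_X = R_X B_i, via alpha_i = R_X beta_i."""
    M = sum(np.outer(log_rotation(A), log_rotation(B)) for A, B in zip(A_list, B_list))
    U, _, Vt = np.linalg.svd(M)               # Kabsch: maximize sum_i alpha_i^T R beta_i
    return U @ np.diag([1.0, 1.0, np.linalg.det(U @ Vt)]) @ Vt

# Synthetic check with a made-up sensor-to-camera rotation.
rng = np.random.default_rng(0)
R_X = rodrigues(np.array([0.2, -0.1, 0.3]))
B_list = [rodrigues(rng.normal(scale=0.5, size=3)) for _ in range(5)]
A_list = [R_X @ B @ R_X.T for B in B_list]
R_est = hand_eye_rotation(A_list, B_list)
```

With R_X estimated, a relative sensor rotation B maps to the corresponding camera rotation as R_X B R_X^T under the same convention. At least two motions with non-parallel rotation axes are needed for R_X to be determined.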

[klein02] G. Klein and T. Drummond. Tightly Integrated Sensor Fusion for Robust Vision Tracking. In Proceedings of the British Machine Vision Conference, BMVC 02, Cardiff, pages 787-796, 2002.

[state96] A. State, G. Hirota, D. Chen, W. Garrett and M. Livingston. Superior Augmented Reality Registration by Integrating Landmark Tracking and Magnetic Tracking. In Computer Graphics (Proceedings SIGGRAPH, New Orleans), pages 429-438, 1996.

[tsai89] R. Y. Tsai and R. K. Lenz. A New Technique for Fully Autonomous and Efficient 3D Robotics Hand/Eye Calibration. IEEE Transactions on Robotics and Automation, 5(3):345-358, June 1989.

[you01] S. You and U. Neumann. Fusion of vision and gyro tracking for robust augmented reality registration. In Proc. IEEE Conference on Virtual Reality, pages 71-78, March 2001.

videos

- Purely vision-based tracking with an abrupt motion
- Same sequence using sensor prediction
- A real-size sequence
- Presentation of the complete mobile ARIS system
- ISMAR'04 demo: scene reconstruction, hybrid tracking and (for comparison) tracking using markers only

papers

- Michael Aron, Gilles Simon and Marie-Odile Berger. Handling uncertain sensor data in vision-based camera tracking. In International Symposium on Mixed and Augmented Reality - ISMAR'04, Arlington, VA, November 2004.